The term “social media bot” is no longer just associated with chatbots or customer service AI. Rather, social media bots have a far more unseemly reputation nowadays due to malicious misinformation campaigns.

But what exactly are these malicious social bots? How do you spot the different types? And are there tools that can help you sort real accounts from the fakes? Here’s what you need to know…

What Are Malicious Social Media Bots?

While there are different types of bots on social media platforms, we will be focusing on malicious political and malware bots. These bots differ from customer service bots or other automated accounts. For example, some bots such as Deep Question Bot are meant to be a fun tool for Twitter users to use. Meanwhile, bots like Thread Reader App turn Twitter threads into a page of text.

Malicious social media bots and fake accounts, however, pose as human users. They aim to manipulate public opinion on social media, spread fake news, increase polarization, sow distrust in institutions, spread government propaganda, and propel conspiracies.

According to the Academic Society, intent makes malicious bots different from other automated accounts.

“Malicious bots, in contrast, are designed with the purpose to harm. They operate on social media with a fake identity. Malicious bots include spam, the theft of personal data and identities, the spreading of misinformation and noise during debates, the infiltration of companies and the diffusion of malware,” the organization says in its 2018 guide on the topic.

Bots achieve this by boosting certain hashtags and keywords, deploying targeted harassment, and sharing certain links and articles.

According to a 2017 working paper by the University of Oxford, titled “Computational Propaganda Worldwide: Executive Summary”, the people behind these bots range from small fringe groups to large political campaigns and governments.

Twitter is the most notorious platform dealing with social media bots, but these malicious bots also exist on Facebook, Reddit, Weibo, and other smaller networks.

Do Malicious Bots Support Specific Politics?

While these bots were notably used in the 2016 US election and the leadup to the Brexit referendum, they aren’t only aimed at one side of the political spectrum.

A paper published in Nature Communications, titled “The spread of low-credibility content by social bots”, found that a common denominator among many malicious bots is the sharing of low-credibility content, such as fake news and misinformation. This misinformation targets different sides of the political spectrum.

“Successful low-credibility sources in the United States, including those on both ends of the political spectrum, are heavily supported by social bots,” the paper says. “Since the earliest manifestations uncovered in 2010, we have seen influential bots affect online debates about vaccination policies and participate actively in political campaigns.”

While bots as a whole aren’t partisan, an individual bot account will usually stick to promoting one viewpoint (such as an anti-science view).

Different Types of Malicious Social Bots

When it comes to fake social media accounts aimed at amplifying political views and sharing misinformation, there are a few different types. They differ in their level of automation and their main aims.

We take a look at the different bots and explain each type…

Standard/Full Bots

A standard social media bot is an account that is fully automated. These accounts have no human input in their daily posts and operation. Rather, they rely on algorithms and scripts to guide their posts.

These bots amplify content (retweet bots) or reply to content with certain keywords or hashtags (reply bots).

Malware Bots

Malware bots are another type of fully automated malicious bot. However, rather than focusing on misinformation, they aim to compromise the security of social media users. These accounts often focus on clickbait content, sometimes posing as an existing content publisher, to try to redirect users to a malicious website.

Cyborgs

A cyborg is a partially automated or hybrid account. The ratio of bot-to-human posts on a specific cyborg account varies, but automation needs to be significant (rather than an occasional automated post).

These accounts use human input to help obscure the fact that they are bots. Human input can help guide replies, perform targeted harassment, or add more human-like behavior.

Cyborgs are not the same as human users who may use schedulers like TweetDeck for their posts. Cyborgs are fake accounts posing as a real person, with the aim of distributing information to achieve a particular goal or for targeted trolling.

How to Spot Social Media Bots

Social media bots are increasingly difficult to identify as their algorithms become more sophisticated. For example, it used to be easy to tell a bot account from a real one because bots rarely posted anything original; they only reshared other posts or added hashtags to existing posts. However, more and more bots are able to post original content and replies.

According to the Atlantic Council’s Digital Forensic Research Lab, political bots share three characteristics across all types.

“Many of these bot and cyborg accounts do conform to a recognizable pattern: activity, amplification, anonymity. An anonymous account which is inhumanly active and which obsessively amplifies one point of view is likely to be a political bot, rather than a human,” the lab says in an article on spotting social media bots.

These traits are some of the major red flags that an account is likely a bot.
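As a rough illustration, the three traits can be turned into simple yes/no checks. The sketch below is only an assumption about how one might do this by hand: the `AccountSummary` fields, the `three_a_flags` function, and both thresholds are hypothetical and chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class AccountSummary:
    """Hypothetical, hand-collected figures for one account."""
    posts: int            # total posts seen in the observation window
    reshares: int         # how many of those posts were retweets or shares
    days_observed: int    # length of the observation window in days
    has_real_name: bool   # profile gives a plausible personal name
    has_own_photo: bool   # profile photo plausibly belongs to the owner


def three_a_flags(acct: AccountSummary,
                  max_posts_per_day: float = 50.0,
                  max_reshare_share: float = 0.9) -> dict:
    """Return one boolean per trait. Thresholds are illustrative assumptions."""
    posts_per_day = acct.posts / max(acct.days_observed, 1)
    reshare_share = acct.reshares / acct.posts if acct.posts else 0.0
    return {
        "activity": posts_per_day > max_posts_per_day,
        "amplification": reshare_share > max_reshare_share,
        "anonymity": not (acct.has_real_name or acct.has_own_photo),
    }


# Made-up example account: 100 posts a day, 95% of them reshares, no identity.
suspect = AccountSummary(posts=3000, reshares=2850, days_observed=30,
                         has_real_name=False, has_own_photo=False)
print(three_a_flags(suspect))
```

An account that trips all three flags deserves a closer look, but none of these checks proves automation on its own.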

A few other signs that a social media account is actually a malicious bot or cyborg include:

- A recent account creation date

- Coordinated resharing and amplifying of posts within a small network of accounts

- Unrealistically fast response times to others, indicating that the account is almost always online

- Low-quality comments with limited and repetitive vocabulary

- Usernames with long, random number sequences

- Stolen profile images from real people or “patriotic” profile images (such as flags, weapons, political symbols)

- A high volume of retweeted and shared content, with limited original posts

- Limited focus on content outside of a pre-defined set of hashtags and topics

Real people tend to tweet on multiple topics, including more mundane posts such as how their day is going. They also do not post 24 hours a day at massive volumes.
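A few of the signs in the list above are mechanical enough to check with a short script. The following is a minimal sketch, assuming you have copied an account's username, creation date, and a handful of its posts by hand. The function names and every threshold (six digits, 90 days, a 0.4 vocabulary ratio) are hypothetical choices, not established cut-offs.

```python
from __future__ import annotations

import re
from datetime import date


def has_long_digit_run(username: str, min_digits: int = 6) -> bool:
    """True if the username contains at least `min_digits` digits in a row."""
    return re.search(rf"\d{{{min_digits},}}", username) is not None


def is_recent(created: date, today: date, max_age_days: int = 90) -> bool:
    """True if the account is younger than `max_age_days`."""
    return (today - created).days < max_age_days


def vocabulary_ratio(posts: list[str]) -> float:
    """Distinct words divided by total words; low values suggest repetition."""
    words = [w.lower() for p in posts for w in re.findall(r"[A-Za-z']+", p)]
    return len(set(words)) / len(words) if words else 1.0


def red_flags(username: str, created: date, today: date,
              posts: list[str], min_vocab_ratio: float = 0.4) -> list[str]:
    """Collect the warning signs that apply to one account."""
    flags = []
    if is_recent(created, today):
        flags.append("recent account")
    if has_long_digit_run(username):
        flags.append("long digit run in username")
    if vocabulary_ratio(posts) < min_vocab_ratio:
        flags.append("repetitive vocabulary")
    return flags


# Made-up example data for illustration only.
sample_posts = ["Fake news! Wake up!", "Wake up! Fake news!", "fake news wake up"]
print(red_flags("patriot84519237", date(2024, 1, 1), date(2024, 2, 1), sample_posts))
```

Plenty of real people have new accounts or numbers in their usernames, so treat the result as a prompt to look closer rather than as a verdict.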

4 Tools for Finding Social Media Bots

Since it’s becoming more difficult to tell bots from humans on social media, researchers and analysts have released a number of tools to better analyze accounts.

None of these tools is foolproof. However, combined with your own observations, they can help you better gauge the likelihood that an account is a bot or cyborg.

These tools focus on Twitter, where malicious bots are possibly the most prolific.

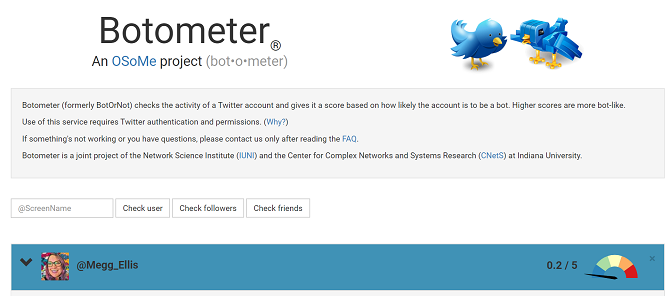

1. Botometer

Formerly called BotOrNot, Botometer is a tool created by a team at Indiana University. The tool uses an algorithm to estimate the likelihood that an account is automated.

With Botometer, you can check not only a Twitter account but also the bot ratings of that account’s followers. Since bots often work within a network, amplifying each other’s messages, this is a helpful feature.

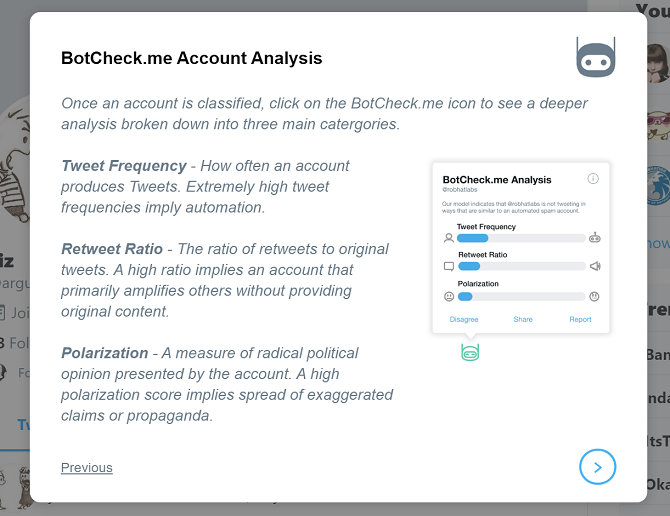

2. BotCheck.me

BotCheck.me is a browser extension that analyzes Twitter accounts to determine if they are propaganda bots. The company’s website also includes an analysis tool.

The tool considers factors such as post frequency, retweets, and polarizing language.

A great part of the tool is the ability to report whether BotCheck has incorrectly categorized an account.

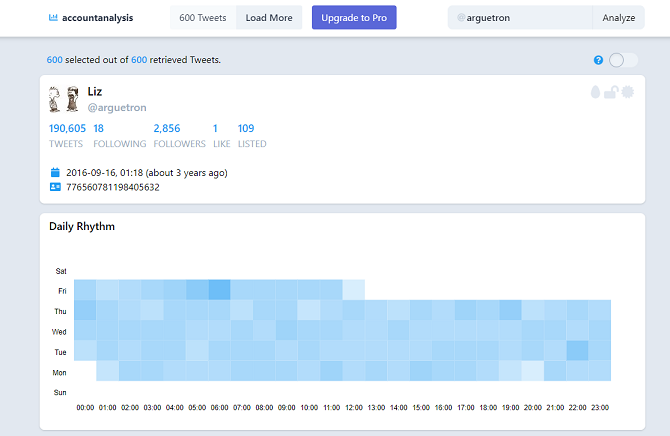

3. Account Analysis

Account Analysis is another tool that lets you analyze the activity of public Twitter accounts. Created by data analyst Luca Hammer, the tool gives insightful metrics and visualizations for account activity.

This helps you identify bot accounts that other tools might have missed. For example, in a test of a known bot account, multiple tools failed to identify the bot (due to its focus on posting tweets, lack of hashtags, and no retweets). However, the daily rhythm of the account’s posts (all day, every day) and the interface used by the account (the platform Cheap Bots, Done Quick!) confirm that the account is, in fact, a bot.

So while Account Analysis doesn’t assign a bot rating, it is still a useful tool to identify bot accounts.
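The "daily rhythm" idea is easy to reproduce on your own data. The sketch below does not show how Account Analysis works internally; it is a hypothetical example that assumes you already have a list of post timestamps in the account's local time, and it simply counts how many of the 24 hours of the day contain at least one post.

```python
from __future__ import annotations

from collections import Counter
from datetime import datetime, timedelta


def hourly_histogram(timestamps: list[datetime]) -> Counter:
    """Count posts per hour of day (0-23)."""
    return Counter(ts.hour for ts in timestamps)


def active_hours(timestamps: list[datetime], min_posts_per_hour: int = 1) -> int:
    """Number of distinct hours of the day with at least `min_posts_per_hour` posts."""
    hist = hourly_histogram(timestamps)
    return sum(1 for count in hist.values() if count >= min_posts_per_hour)


# Made-up data: one account posts every hour for three days,
# the other posts at a handful of waking hours each day.
start = datetime(2024, 1, 1, 0, 0)
bot_times = [start + timedelta(hours=h) for h in range(72)]
human_times = [datetime(2024, 1, d, h) for d in (1, 2, 3) for h in (8, 12, 13, 19, 22)]

print(active_hours(bot_times))    # 24: active around the clock
print(active_hours(human_times))  # 5: gaps for sleep and work
```

An account that is active in nearly every hour of the day, day after day, shows the all-day rhythm described above. A shared or team-run account can look the same, so check the other signs as well.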

4. Social Bearing

Social Bearing also provides a summary of statistics related to public Twitter accounts, similar to Account Analysis. This summary includes tweet frequency, retweets, replies, language sentiment, and more.

An overview of these statistics is incredibly useful in deciding whether an account may be a bot. Best of all, the tool is free and doesn’t require you to sign in with Twitter.

Don’t Trust It Just Because Someone Shared It

While bots are a major tool in the spread of misinformation and fake news, you also need to be wary of the information you consume outside of social media. After all, people also share and retweet fake news.

To raise your defenses against misinformation, check out our guide on how to avoid fake news by looking for its telltale signs.

Image Credit: sdecoret/Depositphotos