A lot of my work is on large consumer-facing sites. As such, they get a tremendous amount of long-tail traffic. That’s right, long-tail search isn’t dead. But you might think so when you look at Google Search Console.

I’ve found there’s more data in Google Search Console than you might believe. Here’s what I’m doing to track hidden long-tail search traffic.

Traffic Hazards

The first step in understanding how to track long-tail search is to make sure you’re not making mistakes in interpreting Google Search Console data.

Last year I wrote about the dangers of using the position metric. You can only use it reliably when looking at it on the query level and not the page level.

Today, I’m going the other direction. I’m looking at traffic by page but will be doing so to uncover a new type of metric – hidden traffic.

Page Level Traffic

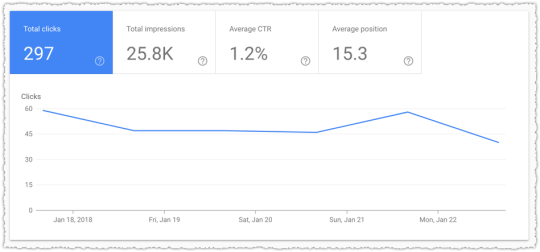

The traffic for a single page in Google Search Console is comprehensive. That’s all the traffic to a specific page in that time frame.

But a funny thing happens when you look at the query level data below this page level data.

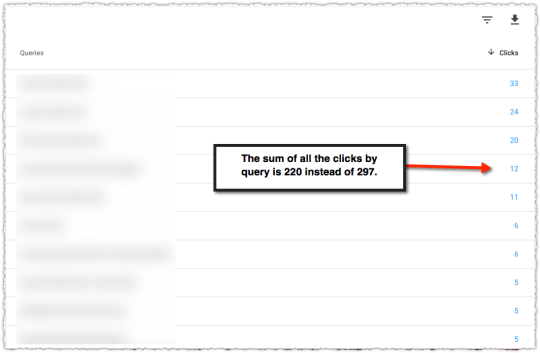

The numbers by query do not add up to the page level total. I know the first reaction many have is to curse Google and write off the data as being bad. But that would actually be a bad idea.

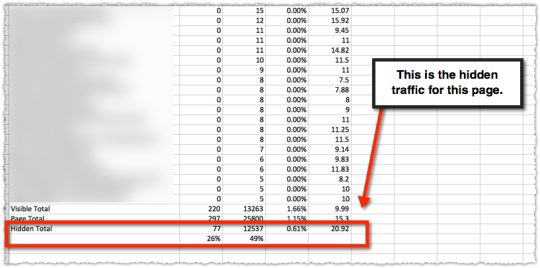

The difference between these two numbers is made up of the queries that Google is suppressing because they are too low-volume and/or personally identifiable. That gap between the page total and the visible total is your hidden long-tail traffic.

Calculating Hidden Traffic

Finding the amount of hidden long-tail traffic turns out to be relatively easy. First, download the query level data for that page. You’ll need to make sure that you don’t have more than 1,000 rows or else you won’t be able to properly count the visible portion of your traffic.

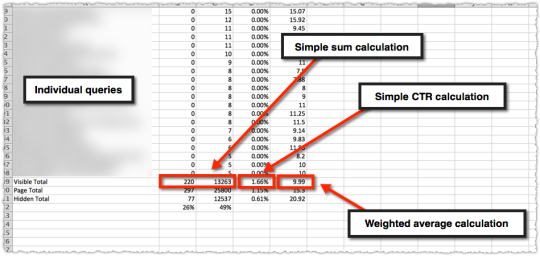

Once downloaded, you calculate the visible total for those queries.

So you’ll have a sum of clicks, a sum of impressions, a calculated clickthrough rate (clicks divided by impressions) and a weighted average for position. The latter is what seems to trip a lot of folks up, so here’s that calculation in detail.

=SUMPRODUCT(Ex:Ex,Cx:Cx)/SUM(Cx:Cx)

What this means is you’re getting the sum product of impressions (column C) and rank (column E) and then dividing that by the sum of impressions.
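For anyone who prefers code to spreadsheet formulas, here is a minimal Python sketch of the same visible-total calculation. The function name `visible_totals` and the sample rows are made up for illustration; it assumes you have already pulled clicks, impressions and position for each visible query out of your export.

```python
def visible_totals(rows):
    """Sum the visible query rows for one page.

    rows: list of (clicks, impressions, position) tuples, one per query.
    """
    clicks = sum(c for c, _, _ in rows)
    impressions = sum(i for _, i, _ in rows)
    # Same idea as SUMPRODUCT(position, impressions) / SUM(impressions).
    weighted = sum(i * p for _, i, p in rows)
    return {
        "clicks": clicks,
        "impressions": impressions,
        "ctr": clicks / impressions if impressions else 0.0,
        "position": weighted / impressions if impressions else 0.0,
    }


# Made-up rows for illustration only, not real Search Console data.
sample_rows = [(50, 1000, 2.0), (10, 500, 5.0), (2, 500, 8.0)]
visible = visible_totals(sample_rows)
print(visible)
```

With these sample rows a plain average of the three positions would be 5.0, while the impression-weighted position is 4.25, because the best-ranking query accounts for half of the impressions.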

Next you manually put in the page total data we’ve been provided. Remember, we know this represents all of the data.

The clicks are easy. The impressions are rounded in the new Search Console. I don’t like that and I hope it changes. For now you could revert to the old version of search console if you’re only looking at data in the last 90 days.

(Important! The current last 7 days option in Search Console Beta is actually representative of only 6 days of data. WTF!)

From there I calculate and validate the CTR. Last is the average position.

To find the hidden long-tail traffic all you have to do is subtract the visible total from the page total. You only do that for clicks and impressions. Do not do that for CTR, folks. Instead, you calculate the hidden CTR from the hidden click and impression numbers.

Finally, you calculate the weighted position for the hidden traffic. This is just a bit of algebra at the end of the day. Here’s the equation.

=((C110*E110)-(C109*E109))/C111

What this is doing is taking (page total impressions * page total rank) minus (visible total impressions * visible total rank) and dividing that by the hidden impressions to arrive at the hidden rank. In this sheet, row 110 is the page total, row 109 is the visible total and row 111 is the hidden total.

The last thing I’ve done here is determine the percentage of clicks and impressions that are hidden for this page.

In this instance you can see that 26% of the traffic is hidden and … it doesn’t perform particularly well.
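The subtraction and the position algebra above can be sketched in Python as follows. This is an illustration under stated assumptions, not a definitive implementation: the function name `hidden_traffic`, the dictionary keys and the numbers are all hypothetical, and the numbers are not the ones from the screenshot.

```python
def hidden_traffic(page, visible):
    """Derive hidden totals from page-level and visible totals.

    page, visible: dicts with 'clicks', 'impressions' and 'position'.
    """
    hidden_clicks = page["clicks"] - visible["clicks"]
    hidden_impressions = page["impressions"] - visible["impressions"]
    if hidden_impressions <= 0:
        # Usually a sign of a truncated export or rounded impressions.
        raise ValueError("no hidden impressions to analyze")
    # (page impressions * page rank - visible impressions * visible rank)
    # divided by hidden impressions.
    hidden_position = (
        page["impressions"] * page["position"]
        - visible["impressions"] * visible["position"]
    ) / hidden_impressions
    return {
        "clicks": hidden_clicks,
        "impressions": hidden_impressions,
        # CTR is recomputed from hidden clicks and impressions, never subtracted.
        "ctr": hidden_clicks / hidden_impressions,
        "position": hidden_position,
        "click_share": hidden_clicks / page["clicks"] if page["clicks"] else 0.0,
        "impression_share": hidden_impressions / page["impressions"],
    }


# Made-up totals for illustration only.
page_total = {"clicks": 100, "impressions": 4000, "position": 6.0}
visible_total = {"clicks": 80, "impressions": 3000, "position": 5.0}
hidden = hidden_traffic(page_total, visible_total)
print(hidden)
```

In this made-up example 20% of clicks and 25% of impressions are hidden, and the hidden queries sit at an average position of 9.0 versus 5.0 for the visible ones.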

Using The Hidden Traffic Metric

This data alone is interesting and may lead you to investigate whether you can increase your long-tail traffic in raw numbers and as a percentage of total traffic. It can be good to know what pages are reliant on the more narrow visible queries and what pages draw from a larger number of hidden queries.

In fact, when we had full keyword visibility there was a very predictable metric around number of keywords per page that mapped to increases in authority. It still happens today, we just can’t easily see when it happens.

But one of the more interesting applications is in monitoring these percentages over time.

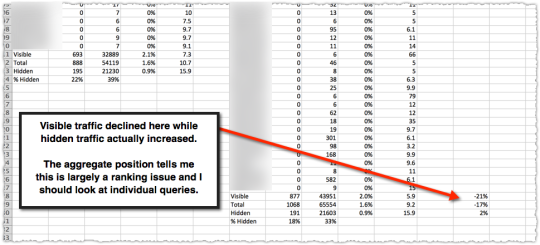

What happens to these metrics when a page loses traffic? I took two time periods (of equal length) and then determined the percentage loss for visible, total and hidden.

In this instance the loss was almost exclusively in visible traffic. The aggregate position number (dangerous to rely on for specificity but good for finding the scent of a problem) leads me to believe it’s a ranking problem for visible keywords. So my job is to look at specific keywords to find which ones dropped in rank.
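Here is a rough Python sketch of that period-over-period comparison, assuming you already have the page total and visible total clicks for two periods of equal length. The names `pct_change` and `compare_periods`, the dictionary keys and the numbers are hypothetical.

```python
def pct_change(before, after):
    """Fractional change from before to after (None if before is zero)."""
    return (after - before) / before if before else None


def compare_periods(before, after):
    """before, after: dicts with 'page_clicks' and 'visible_clicks'."""
    # Hidden clicks are always derived: page total minus visible total.
    hidden_before = before["page_clicks"] - before["visible_clicks"]
    hidden_after = after["page_clicks"] - after["visible_clicks"]
    return {
        "total": pct_change(before["page_clicks"], after["page_clicks"]),
        "visible": pct_change(before["visible_clicks"], after["visible_clicks"]),
        "hidden": pct_change(hidden_before, hidden_after),
    }


# Made-up click counts for illustration only.
period_1 = {"page_clicks": 1000, "visible_clicks": 740}
period_2 = {"page_clicks": 800, "visible_clicks": 560}
changes = compare_periods(period_1, period_2)
print(changes)
```

In this made-up case the page is down 20% overall, visible clicks are down roughly 24% and hidden clicks only about 8%, which would point toward a problem with the visible keywords.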

What really got me curious was when the opposite happens.

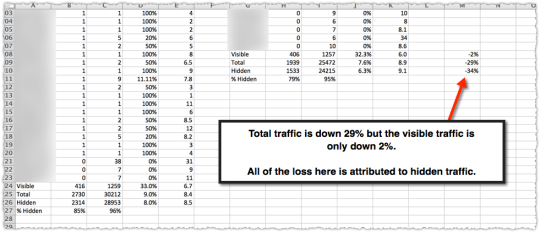

Here the page suffered a 29% traffic loss but nearly all of it was in hidden traffic. My job at that point is to figure out what type of long-tail queries suddenly evaporated. This isn’t particularly easy but there are clues in the visible traffic.

When I figured it out things got very interesting. I spent the better part of the last three months doing additional analysis along with a lot of technical reading.

I’ll cover the implications of changes to hidden traffic in my next post.

Caveats and Traps

This type of analysis is not particularly easy and it does come with a fair number of caveats and traps. The first is the assumption that the page level data we get from Google Search Console is accurate and comprehensive. I’ve been told it is and it seems to line up with Google Analytics data. #ymmv

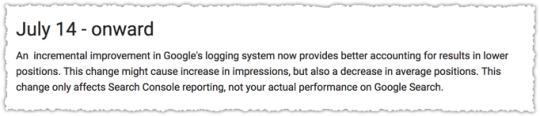

The second is the assumption that the data provided at the query level is consistent over time. In fact, we know it isn’t since Google made an update to the data collection and presentation in July of 2017.

Mind you, there were some other things that happened during that time and if you were doing this type of analysis then (which is when I started in earnest) you learned quite a bit.

You also must select a time period for that page that doesn’t have more than 1,000 visible queries. Without knowing the full visible query total you can’t calculate your hidden total. Finding the right timeframe can sometimes be difficult when looking at high volume pages.

One of the traps you might fall into is assuming that the queries in each bucket remain stable. That’s not always the case. Sometimes the composition of visible queries changes. And it’s hard to know whether hidden queries were promoted to visible or vice versa.

There are ways to control for some of this in terms of the total number of visible terms along with looking at not just the raw change in these cohorts but the percentage changes. But it can get messy sometimes.

In those situations it’s down to interpretation. Use that brain of yours to figure out what’s going on.

Next Steps and Requests

I’ve been playing with this metric for a while now but I have yet to automate the process. Adjacent to automation is the 1,000 visible query limit, which can be eliminated by using the API or tools like Supermetrics and/or Data Studio.
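As a starting point for that automation, here is a hedged Python sketch of paging through query rows for a single page. The request body uses the field names of the Search Console API's Search Analytics query (`startDate`, `endDate`, `dimensions`, `dimensionFilterGroups`, `rowLimit`, `startRow`). Everything else is an assumption for illustration: `build_query_body` and `fetch_all_rows` are hypothetical helpers, and `run_query` is a callable you would supply yourself, for example a thin wrapper around `service.searchanalytics().query(siteUrl=..., body=body).execute()` from google-api-python-client.

```python
def build_query_body(page_url, start_date, end_date, start_row=0, row_limit=1000):
    """Request body asking for query-level rows filtered to one page."""
    return {
        "startDate": start_date,  # "YYYY-MM-DD"
        "endDate": end_date,
        "dimensions": ["query"],
        "dimensionFilterGroups": [
            {
                "filters": [
                    {
                        "dimension": "page",
                        "operator": "equals",
                        "expression": page_url,
                    }
                ]
            }
        ],
        "rowLimit": row_limit,
        "startRow": start_row,
    }


def fetch_all_rows(run_query, page_url, start_date, end_date, row_limit=1000):
    """Page through results until a short (or empty) batch comes back.

    run_query: callable taking a request body and returning the parsed
    response dict (hypothetical; supplied by the caller).
    """
    rows = []
    start_row = 0
    while True:
        body = build_query_body(page_url, start_date, end_date, start_row, row_limit)
        batch = run_query(body).get("rows", [])
        rows.extend(batch)
        if len(batch) < row_limit:
            break
        start_row += row_limit
    return rows
```

Paging only lifts the row cap on the visible queries. The suppressed queries still won't come back, so the hidden total would still be calculated by subtracting the visible total from the page total.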

While performing this analysis on a larger set of pages would be interesting, I’ve found enough through this manual approach to keep me busy. I’m hopeful that someone will be excited to do the work to automate these calculations now that we have access to a larger data set in Google Search Console.

Of course, none of that would be necessary if Google simply provided this data. I’m not talking about the specific hidden queries. We know we’re never getting that.

Just give us a simple row at the end of the visible query rows that provides the hidden traffic aggregate metrics. An extra bonus would be to tell us the number of keywords that compose that hidden traffic.

TL;DR

You can track hidden long-tail search traffic using Google Search Console data with some straightforward math. Understanding and monitoring hidden traffic can help diagnose ranking issues and other algorithmic shifts.
